🌻 notes on AI, labor, and China

an expansion pack for my NYT story on the "permanent underclass"

I have a new reported essay in the New York Times on AI, work, and Silicon Valley’s fear of the “permanent underclass.” It took 2.5 months, runs 4,600 words, involved interviews with 50+ technical researchers, economists, policy experts, and policymakers, and is all-around the most ambitious piece I’ve attempted yet. (It’s also why I’ve been quieter here while finishing it up.)

I hope you read the piece in full (gift link for the subs <3), because it involves a bunch of deeper reporting on the AI labs’ perspectives and why this might be politically salient. I have been pleasantly surprised at the reception—I’ve received nice notes from Democratic and Republican politicians, researchers at every major lab, hardcore AI skeptics, and notoriously cranky economists. Most critics either seemed to struggle with basic reading comprehension, or were polite enough to subtweet instead of dunking (I’ll address them below!).

Here’s an excerpted summary:

Most people I know in the A.I. industry think the median person is screwed, and they have no idea what to do about it. I live in San Francisco, among the young researchers earning million-dollar salaries and the start-up founders competing to build the next unicorn. While Silicon Valley has long warned about the risk of rogue A.I., it has recently woken up to a more mundane nightmare: one in which many ordinary people lose their economic leverage as their jobs are automated away.

Most economists and A.I. experts do not expect [the most extreme] scenario, but the persistence of the permanent underclass idea should concern all of us. First, because it signals how much collateral damage the A.I. companies will tolerate en route to A.G.I. And second, because the production of a social underclass is a policy choice. Instead of waiting for impact, we need to think seriously — now — about how we plan to support workers through A.I. disruption.

The rest of this post is a ramblier, weedsier “expansion pack” for this story:

Perspectives on AI and work from my trip to China

Responses to job loss skeptics like Altman, Andreessen, and Klein

A Chinese perspective on AI and work

I finished writing the NYT piece while traveling across China with friends and family. It’s a fascinating place to be thinking about AI and jobs because the country has been experiencing high levels of urban youth unemployment for years—and not because of AI. I noticed after my China trip last year that there are striking similarities in the way that twenty-somethings in both countries talk/joke about economic precarity.

Starting in 1999, the Chinese State Council made a big push to upskill the population via mass college enrollment. This was an important bet for the country’s development, but it also skewed the balance of human capital vis-à-vis available jobs: China now has an oversupply of young knowledge workers and an undersupply of factory workers. It turns out many college grads would rather sit around unemployed than do backbreaking manual work.[1] Because of low levels of household spending, the Chinese service sector is smaller than in similarly sized economies. And because Chinese policy and finance favor state-linked firms, private companies face a tougher time growing.

All this unemployment is a big problem for Beijing, which believes that gainful work is both a character-forging moral responsibility and a useful way to keep the population locked in (metaphorically, literally) and out of the streets. Thus, I expect China to be more active than the US in promoting employment—and a place whose policies we should carefully observe.

So while I was theoretically in China to learn about the open-source AI and robotics ecosystem, I largely spent my time asking those I met: What do all these unemployed young people do?

One countercultural option is tangping: lying flat, doing nothing, and rejecting the societal pressure to 996 your way to the top. Notably, China has a much lower cost of living than the US, which makes it possible to survive on much less. Some of these young people are artists or creatives, working just a few hours a day while loitering with friends at internet cafes. When I was in Dali in 2024, we came across many such young people doing tarot on the streets. But as much as the meme has caught fire abroad, tangping is still a niche phenomenon.

China is also experiencing a care economy boom. Elder care roles are growing given the aging population: some folks find work helping senior citizens navigate digital services, visit the doctor, or run other errands. I also learned about the concept of “full-time children”: young adults who move back in with their own parents and grandparents to do housework and keep them company in return for pay. While this seems sweet in the short term—I’m sure many aging parents are happy to have their kids back—it looks like Gen Z riding on the boom generation’s savings, not finding a sustainable path to building wealth of their own.

Gig work is another option—often delivery driving, or waimai, for e-commerce giants Meituan or Ele.me. Urban Chinese lifestyles run on waimai: you can get food, groceries, or goods delivered for just a few yuan. Pedestrians can’t step onto the street without getting nearly run over by a blue or yellow-helmeted scooterer. These workers seem to have memorized every mall layout and office park, racing up stairs and down alleys to get tote bags of noodles and milk tea to their hungry destinations. It’s intense, often brutal work, riddled with safety risks, all to earn the meager wages of around $14 USD per day.

Only the luckiest college graduates are awarded the “iron rice bowl” of a secure public sector or state-owned enterprise job, which comes with perks like subsidized housing and protections from layoffs. While SOEs and government offices are notoriously bloated, the need for social stability makes the government reluctant to trim the workforce.

From my shallow observer’s point of view, many public sector jobs already looked like jobs programs. China’s parks and subway stations are spotless because they are filled with far more workers than they seem to need. Gardeners clipped every stray twig from hedges, street cleaners swept unsullied sidewalks, and security guards made unconvincing shows of checking subway riders’ bags. Despite these inefficiencies, it’s hard to overstate the quality of life boost one gets from having access to free, clean, gorgeous parks lined with leafy trees, flower beds, refreshment stations, and regular pop-up entertainment. I’ve heard whispers in the US about doing a “Works Progress Administration for the AI era”—reallocating workers to beautify our physical world and public infrastructure, perhaps through programs like a national service. Strolling along the pristine West Lake in Hangzhou or the North Bund in Shanghai, this doesn’t seem like such a bad idea to me.

AI-specific job loss fears still seem less severe in China than in the US. For example, the robotics companies we met gladly explained that their value proposition was automating human workers. When we visited Galbot’s 24/7 pharmacy, the comms team noted that their robot, unlike humans, did not require training, sleep, or breaks; and that Galbot can do night shifts that humans won’t. (The pharmacy was really cool, and seems like a real consumer surplus.) Multiple AI researchers we met smiled as they said they were eager to build their replacements—in fact, that’s how they defined AGI. Others brought up fully automated dark factories as a way to maintain Chinese economic growth as birth rates drop.

So why isn’t China experiencing the same level of panic about AI and jobs?

First, as mentioned above, Chinese workers have faced cutthroat job competition and rampant economic anxiety well before AI hit the scene. When I told my 24-year-old cousin that American new grads felt like they couldn’t find jobs because of AI, he scoffed and said: “In China, we can’t find jobs because there are too many people.” Hu Anyan, the author of I Deliver Parcels in Beijing and former waimai worker, said that his blue-collar colleagues are under “so much livelihood pressure that they have no time to think about” AI replacement.

Second, because of this competitive environment, most Chinese desk workers instead focus on how they can leverage AI mastery to outcompete peers. Some call this “techno-optimism,” but I think it’s closer to techno-determinist pragmatism: everyone assumes AI is here to stay, so individuals should wield ChatGPT/OpenClaw/Doubao[2] to avoid falling behind. Playing conscientious objector would only disadvantage your own prospects. A translated WeChat analysis of my NYT piece contrasts Silicon Valley’s political concerns about AI inequality with China’s “save yourself” mentality: “Domestic [Chinese] articles discussing AI fall into two categories: one emphasizes how powerful AI is, urging you to learn it quickly; the other emphasizes how terrifying AI is, urging you to get on board quickly.”

Third, China’s economy is less concentrated in knowledge work overall; and AI has less of a cost advantage when human labor is already so cheap. In a translated comment on a Zhihu thread about my piece, one Chinese netizen wrote that “The US economy is largely virtual, so there’s a sense of impending doom with AI. In contrast, our country’s economy is primarily based on the real economy.” Another user adds that “Jobs with a monthly salary of 3,000 RMB would be unprofitable to replace with AI… However, in the US, jobs with annual salaries of over 100,000 USD are the hardest hit.”

Finally, the Chinese government seems more willing to intervene to protect workers from AI job loss. My aunt, who works at an SOE, told me that she knows that AI means that “two people can now do three people’s jobs”—but she doesn’t think her firm would do layoffs anyway. In an analysis of Chinese AI/labor policy, Matt Sheehan wrote that a recent labor arbitration case in Beijing “ruled that firing someone because AI can now do their job constitutes a violation of the Labor Contract Law.” He also found that China’s AI+ plan and other follow-up policies were more sophisticated than expected on these questions.[3]

It’s worth caveating that censorship distorts public narratives about AI impacts. The government has recently clamped down on online discussions of economic pessimism, and in 2023 paused releasing unemployment data when the numbers got too high. Even narrow topical censorship diffuses broadly throughout culture: on average, Chinese tech workers we met seemed less comfortable talking about social issues—not necessarily because they weren’t allowed to, but because they seemed less familiar with having these conversations. If readers know more about how China is experiencing and managing AI’s labor impacts, please send me a note!

(During the trip, we met teams from basically every important Chinese AI and robotics lab, which was an incredible opportunity. Thank you Caithrin for running through (great) walls to make this happen. For more AI-focused takeaways on the trip, read write-ups from fellow travelers Lily Ottinger and Kai Williams here, Kevin Xu here, afra Wang here, Nathan Lambert here, Florian Brand here, and Azeem Azhar and Hannah Petrovic here.)

Rebuttals to rebuttals to my NYT piece

I still have no clue how AI will transform work in the very long term, and I don’t trust anyone who says they confidently do. I could imagine that 100 years from now, we’re all gainfully employed as life coaches and party hosts, or that we’re enjoying endless summer on a token-taxed UBI, or that the permanent underclass is real and humans are all slaving away as meat robots (or dead).

But I am concretely worried about the next few years, and wouldn’t mind researching solutions for worst-case scenarios too. I suspect AI will cause serious labor disruption to some roles/sectors—as any freelance illustrator or new college grad can tell you—and that would be both morally and politically disastrous without policy action. You don’t have to believe in an AI “permanent underclass” to feel urgency on this subject.

Therefore, I want to answer some of the anti-jobs-doomer takes that the NYT piece spawned (though most weren’t presented as direct responses). These articulate a few main counter-arguments:

AI will be a tool that augments workers

Jevons paradox means there will be more jobs than ever

The human premium will preserve human work

Each has some validity, but I still find myself more uncertain—and thus less sanguine—than their proponents. (I’ve also discussed these views on podcasts like Bankless and American Inequality, and am footnoting a summary of my views.[4])

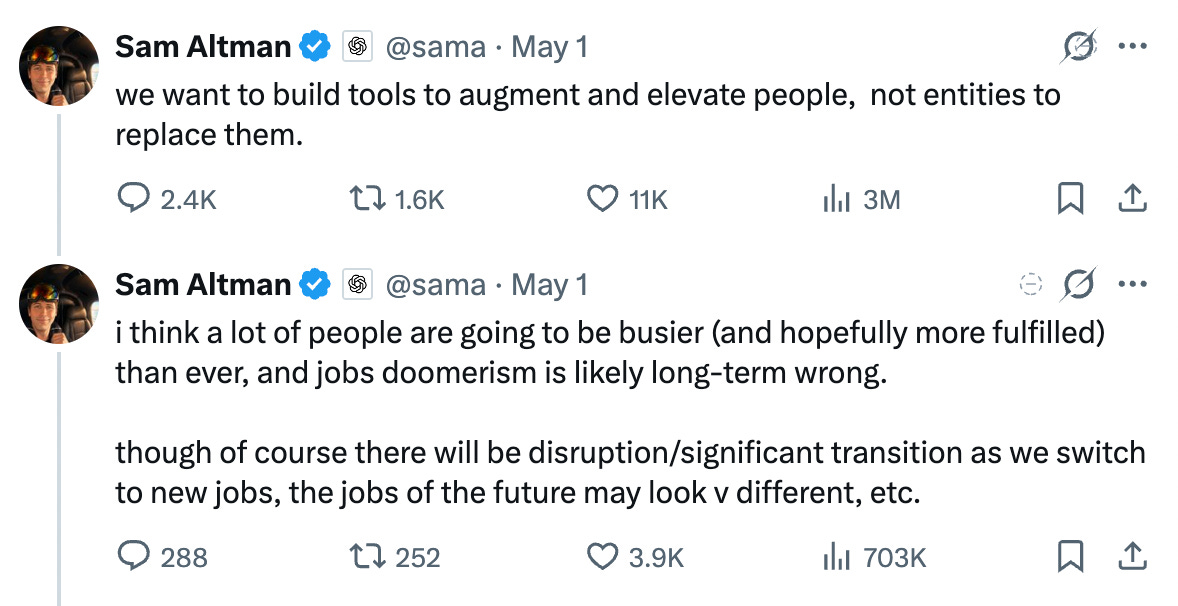

Contra Altman on tool AI

In conversations about AI and jobs, people often talk about “augmentation vs. automation” or “tool AI vs. agent AI.” The former is seen as socially good—it’s when AI helps a worker do their job better/faster—and the latter is bad—it’s when the AI replaces the whole role at once. Some people, from Daron Acemoglu to Sam Altman, have proffered that we should fend off job displacement by choosing to build AI that augments, not automates. Take this tweet thread @sama posted after the NYT piece:

Unfortunately, I think building “tool AI” is basically cope. Let’s look at two scenarios:

Imagine a senior designer whose mandate is to work with executive leadership to develop a brand guide from scratch (colors/fonts/motifs/etc). Then, imagine a junior designer at the same firm whose job is to create digital illustrations for company blog posts based on those guidelines. Current image-generation AI might merely assist the senior designer—allowing them to mock up a wide range of early-stage ideas for team feedback—while totally replacing the need for the junior, since the editorial team can just prompt the image AI directly. The same technology that augments the senior fully automates the junior.

Imagine a rocketship startup that has a far longer list of features than it has time to build them. It can’t hire enough engineers to keep up with user growth. When Claude Code shows up, the engineering team gets more productive, but the company keeps hiring human devs too. Then, imagine a legacy software firm that has seen declining growth for the last three years. It was already considering layoffs, but the arrival of Claude Code makes the decision easier. The firm cuts the bottom 50% of the engineering workforce and asks the remaining engineers to make up the difference with AI. It does not hire more. The same technology that augments workers in a growing firm automates workers in a shrinking one.

Put another way: “Augmentation vs. automation” is real, but it is a product of the firm/social context, not of the technology itself. I don’t believe that OpenAI can or will restrict how their customers use their AI products to “augmentative” uses only. Regardless of Altman’s stated intentions, people are going to hire fewer copywriters and junior devs as a result of ChatGPT and Codex. That is not a decision that OpenAI gets to make.

Furthermore, all the incentives in AI point toward the development of agents, not tools. gwern has written a detailed post on why this is true: First, because it’s generally cheaper to remove the human from the loop. And second, because agents will get smarter than equivalent tools—they can gather and learn from real-time data or reward signals. It’s why self-driving cars like Waymo (a narrow agent) will eventually outperform a human cab driver with Google Maps (a human with a tool). The labs are under a tremendous amount of financial pressure to build smarter, more useful AIs. I don’t see faux-principled positioning around “tools” changing their actual product goals.

Finally, even if AI’s jaggedness keeps it a complement to humans vs. a one-to-one replacement in current firms, we will see new firms reorganize themselves fully around AI rather than human labor—something that’s already happening at San Francisco startups and in China’s “OPCs,” or one-person companies. As these human-lean, AI-intensive firms suck up market share, we should expect labor share to drop.

Contra a16z on Jevons paradox

The most common argument against jobs doomerism is Jevons paradox: the cheaper something is, the more people will want it. Cheaper software leads to more demand for software. Cheaper therapy leads to more demand for therapy. When the cost of something drops, it expands the potential market for that thing. Demand is not fixed; it is elastic, perhaps infinite. Jevons paradox was true with financial analysts, it was true with radiologists, and it even looks true with the trajectory of software hiring so far, as David George at a16z argues in a recent blog post refuting the “permanent underclass” theory. The post critiques jobs doomers for falling prey to the lump of labor fallacy: they fail to realize that the whole economy will grow once new machine labor enters.

Jevons and the lump of labor critique assume that more demand for stuff equals more demand for humans. This historically has been true, as long as human bottlenecks exist. But the pace and generality of AI progress could confound these assumptions.
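One hedged way to make that assumption explicit is a back-of-envelope model. The symbols here are hypothetical and not taken from George’s post or the NYT piece: demand has constant price elasticity $\varepsilon$, price equals unit labor cost, and every unit of output still needs $1/a$ human workers.

```latex
% Stylized assumptions (hypothetical symbols, for illustration only):
%   Q = quantity demanded, p = price, w = wage, A = demand scale constant,
%   a = output per human worker, \varepsilon = price elasticity of demand,
%   L = human employment
\begin{align*}
Q &= A\,p^{-\varepsilon}, \qquad p = \frac{w}{a} \\
L &= \frac{Q}{a} = A\,w^{-\varepsilon}\,a^{\varepsilon - 1} \\
\frac{\partial \ln L}{\partial \ln a} &= \varepsilon - 1
\end{align*}
```

In this sketch, human employment rises with productivity only when $\varepsilon > 1$, which is the Jevons case. It also bakes in the human bottleneck: if a share $s$ of the work needs no humans at all, employment becomes $L = (1 - s)\,Q/a$, which can fall even while $Q$ grows.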

First, AI may generalize to new tasks and skills faster than people can retrain. That would mean fewer humans are needed to produce the same thing, “skill-biased technological change” favors superintelligent machines, and employment can go down even as demand goes up. After all, Jevons paradox says there will always be more demand, not that we need human workers to fulfill it. To look at an example: Claude Code is currently better than junior engineers but not seniors, so demand for senior engineers is rising, even if demand for juniors is falling. But if coding agents advance at current rates, we may no longer need senior engineers in two years—humans can get fully removed from the software production loop.

Second, demand may be dampened by job displacement and inequality. If the riches from AI flow to too few people, those wealthy few will hit limits on their personal consumption and save/invest the rest. Rich people have the same 24 hours in a day as the rest of us: they might hire a few personal trainers and life coaches, but only so many. The top 1% can’t consume as much as a large middle class, and redistribution would be necessary to keep consumption going. China today is a useful analogue: income inequality limits household spending, which then constrains the size of the services economy. The viral Citrini essay is an extreme version of this thesis. Alex Imas has also fleshed out a “negative economic growth” scenario here, though he argues it is unlikely and preventable with policy action.

Contra Klein and Imas on the human premium

A complementary argument is that demand will shift into areas of scarcity like the “relational sector”: care work, coaches and tutors, private assistants. “AI may reduce the commodity sector’s share of expenditure and increase the share going to goods and services where the human element remains visible and valuable,” wrote Alex Imas in a piece exploring this scenario.

Ezra Klein, citing and building on Imas’s writing in a recent column, shared examples from his own use of chatbots for editing, health, and therapy:

The better the A.I. got, the more I had to discuss with the humans in my life. The A.I. thought my symptoms were concerning, so I made an appointment with my doctor (allergies, it turned out); it had a good insight on a personal challenge, and that opened a new conversation with my therapist; it allowed me to validate a research idea, and that opened up a new question to explore with my editor; A.I. has made it possible to caption videos easily, and now I work with more video editors.

But Klein’s example also reveals why I am less rosy than he is. I am currently a freelancer with limited access to costly human services. When my human therapist doubled her hourly rate last year, I decided to stop therapy and chat more with Claude instead. Given my minimal production budget, AI video tools have obviated the need for a human video editor. Just because something is scarce and high-status doesn’t mean that everyone will be able to afford it; in fact, many people won’t.

So we may end up in a world where most workers are handmaidens to the super-rich, while most normal people settle for cheap digital alternatives. I think about the hourglass economy that already exists in San Francisco: every day, thousands of service workers commute into the city to make salads and clean offices for the high-paid tech workers who live here.

Finally, the human premium is simply less significant than most assume. Plenty of people—including the wealthy—prefer Waymos to Ubers, telehealth to doctors’ visits, and Netflix to live theatre. Digital services often beat humans in convenience, consistency, and quality. And sure, artisanal ceramicists/bookbinders/basket-weavers today command high prices for their craft, but these are dwindling niches rather than mass-employment sectors.

As Anthropic cofounder and fellow Substacker Jack Clark says in the NYT piece, future society could thrive with a larger relational sector—I would love to see a 1-1 student-teacher ratio, for instance—but I think directed policy action will be required to architect that world.

Labor’s saving grace may be the fact that most jobs are much less automatable than software, the only job that most AI researchers have ever had. Even broader, “real-world” task benchmarks like APEX and GDPVal test narrow tasks that are far from encapsulating the complexity of most jobs. That’s what keeps me from doomerism: We are still a far cry from full automation.

Yet “fine for most” does not mean “fine for all.” Nearly all the jobs optimists still acknowledge the possibility of a “painful transition,” as the euphemism goes. As the US learned from the China shock, the deaths of despair that followed, and the right-populist turn, even a few million job losses in a few cities can cause immense suffering to impacted workers—even if jobs are created elsewhere and national GDP goes up. When hospitals replaced steel in post-industrial Pennsylvania, not everyone was willing to retrain, and the new low-wage care jobs were as brutal as the factory work. These human consequences did not show up in economic forecasts or aggregate charts.

I will keep quoting Carl Benedikt Frey: “Most economists will acknowledge that technological progress can cause some adjustment problems in the short run. What is rarely noted is that the short run can be a lifetime.”

—Jasmine

[1] For the jobs debate, it’s not just important to have open roles available, but to ensure those roles fit people’s skills and desires. During deindustrialization, many economists overestimated how many laid-off factory workers were willing to retrain or move. In China, it matters that college grads don’t want to do blue-collar work.

[2] Doubao, by ByteDance, is the most popular AI chatbot in China. ByteDance is also one of the only closed-source labs. Yet there’s almost no Western coverage or awareness of this app!

[3] Some Chinese have more faith in their own country’s safety net compared to the US. “The wealth generated by AI can entirely be redistributed by the state to improve people’s livelihood and enhance the welfare of all citizens,” said one WeChat commenter. “During the Roosevelt era, America had this kind of influence. But what about today’s America? Haha, haha, haha, hahaha! The US president and his ‘family members’ are more concerned with how to chart K-lines and make big profits.” Another nationalistic commenter expressed a bleaker prediction: “A wave of Western refugees will soon flood into China. We need to prepare.” In reality, though, China’s welfare system is significantly shallower than the US’s.

[4] What do I think is going to happen with jobs? My medium-term views on AI and work are as follows (confidence is a soft ~50%):

I do not expect AI to cause a mass jobs apocalypse. Current AI is jagged, diffusion is slow, and most jobs have some property—e.g. physical tasks, social/relational tasks, union or regulatory protection—that makes automation hard. “Most jobs are way harder to automate than software” is the best hedge against job loss.

Still, I expect layoffs and hiring freezes in some knowledge work roles, such as software engineers, customer support staff, graphic designers, and copywriters. The usual arguments about lumps of labor and Jevons paradox can be outweighed if AI learns new skills faster than humans can reallocate, and if AI is cheaper too.

This will suck for impacted workers, especially new grads and older workers, who may lack the resources or willingness to retrain. We also don’t have examples of large-scale retraining programs that work.

We will still have “jobs” 5-10 years from now, but my guess is many will be quite different: much more in the “relational economy” (care/entertainment), and a shift from large firms to small/one-person businesses, gig work, and the informal economy. This will require us to rethink benefits, e.g. healthcare, to detach them from firms.

Wealth inequality will widen as returns to capital increase. This decreases political leverage and social trust among workers and ordinary people.

All of the above is an accelerant for political backlash, especially given the fragile and polarized state of American culture today. Even “small” or “transitory” amounts of disruption can be politically explosive.

I don’t think automation is in itself bad: in many cases, it means getting humans out of rote jobs, improving the quality/safety of services, or generally increasing productivity. But with this change comes the responsibility of supporting workers who are impacted through no fault of their own.


