🌻 AI populism's warning shots

the battles over AI are no longer just about the tech

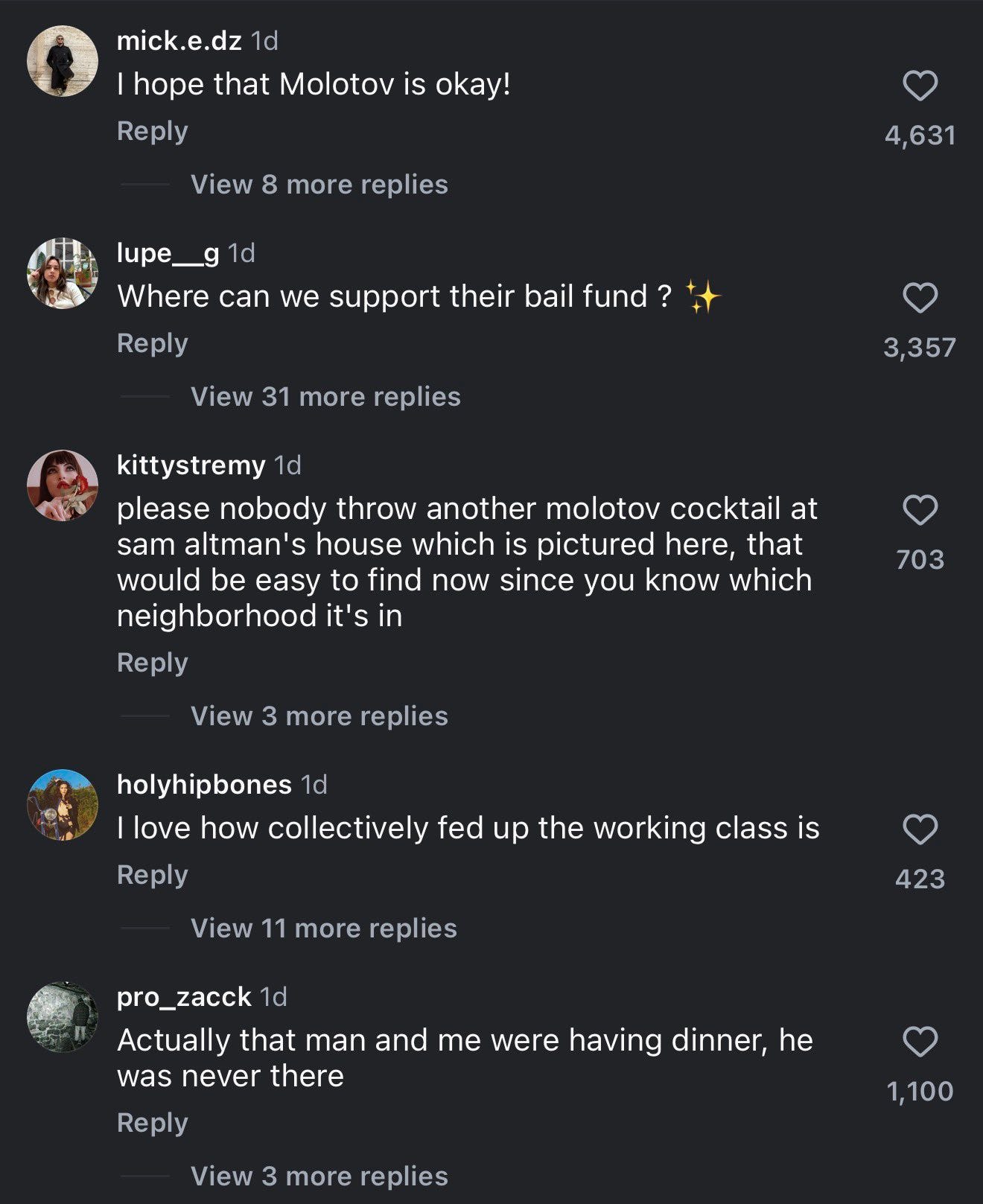

Sam Altman was targeted by two violent attacks in the last four days. First on Friday, when a man threw a Molotov cocktail into his home, and second yesterday, when two others shot at his door. Nobody was hurt in either case. Still, these acts are horrifying. Most of the AI safety intelligentsia—including some of Altman’s harshest critics—rightfully condemned the attacks.

On Instagram, however, the reaction was different.

CEOs aren’t the only ones facing violent AI backlash. In Indiana last week, local councilman Ron Gibson woke up to 13 gunshots and a note reading “NO DATA CENTERS” at his doorstep. He had recently approved a new data center project in his district. At a planning commission meeting, he told the audience that it was expected to create 300 new jobs over the next three years—but these reassurances did not calm the boos.

I’d wager that incidents like these are only warning shots for how nasty AI politics will get.

In 2026, the politics of AI has a new meta: “caring a lot about AI” is no longer correlated with “knowing a lot about AI.” AI is rising in salience faster than any other issue among US voters. Politicians gearing up for the 2026 midterms and 2028 primaries won’t lag far behind. That means AI policy is no longer the remit of a few wonky technocrats. From now until forever, most people regulating, protesting, and talking about AI will not be interested in AI per se, but rather how it impacts their preexisting belief systems and political agendas. These forces are stronger, more diffuse, and more volatile than we have seen in AI policy before. And the curve is just about to shoot straight up.

I define AI populism as a worldview in which AI is viewed not only as a normal technology but as an elite political project to be resisted. It regards AI as a thing manufactured by out-of-touch billionaires and pushed onto an unwilling public to achieve sinister aims like “capitalist efficiency” (layoffs) and “population management” (surveillance). AI populists don’t really care whether ChatGPT is personally useful, or if Waymos eke out some safety gains: AI’s utility as a tool is immaterial relative to the unwelcome societal change it represents.

Among the public, AI populism shows up as individual attempts to block AI encroachment; for example, data center NIMBYism, AI witchhunts among creatives, and in the extreme, assassination attempts like what happened to Altman this week.

I don’t know what exactly motivated Altman’s assailants, of course, just as I don’t know what specific thing radicalized Luigi Mangione or Tyler Robinson. But the 20-year-old Molotov-thrower had joined a Pause AI Discord and penned a Substack post on existential risk, writing that AI executives are “sociopaths/psychopaths” and “gambling with your future and the lives of your children… These people are almost nothing like you.” We know less about the second set of attackers, except that they are also young: 23 and 25.

What seems likely is that the anti-elite and nihilistic attitudes that have dominated US political culture in the last few years are transmuting into anger at AI billionaires. Young people are particularly incensed. Gen Z already grew up in a world that they felt was shrinking, where grift and shitcoins and sports gambling looked like the only paths up. Now, they’re being told AI is the reason they can’t get a job—and potentially never will. Just as the UnitedHealthcare CEO seemed like a justified target to many disillusioned and radicalized young people, so will AI executives to many more.

Obstructionism, cancel culture, ~~terrorism~~ direct action: this is what politics looks like when faith in democratic institutions has collapsed.

(4/14/26 update: New information about the firebomber, Daniel Moreno-Gama, suggests he was specifically focused on A.I. safety and x-risk concerns. We don’t yet know more about Altman’s or Gibson’s other attackers.)

In the policy community, AI populism is just as impassioned, if more strategic and buttoned-up in approach. I first wrote about simmering populist sentiments when I visited Washington DC in February, sitting in closed-door meetings where representatives from opposing political tribes described the policy campaigns they were about to launch. “All the money is on one side and all the people are on the other,” I found. “We aren’t ready for how much people hate AI.”

The Overton window widened further by the time I returned in late March. Within the span of a month, the DC conversation had shifted from “policymakers are mostly too paralyzed to act” to “policymakers are scrambling to design their AI agenda, now.” Every established interest group is now rushing to come up with an AI plan, from labor unions to environmentalists to social conservatives. I stopped hearing complaints about the lack of political will. The fact that OpenAI just published a surprisingly progressive policy whitepaper, one that floats higher corporate taxes and a 32-hour workweek and that critiques the “concentration of wealth” in “firms like OpenAI,” is evidence of the shifting winds.

I am sympathetic to many of these concerns: we do need stronger national regulation around AI. Simultaneously, the unknown, unpredictable nature of AI also makes it a perfect political bogeyman. Because nobody knows exactly how fast AI is moving or where it’s headed—just that it’s really big—it becomes the perfect justification to slap onto whatever policy you already wanted passed. In this sense, “because AI” is the new “because China.” For Bernie, AI is a shiny new reason to implement single-payer healthcare or a billionaire tax; for the Pentagon, it’s a reason to lock in government control over private companies and their dual-use tech. This is a dynamic I didn’t properly appreciate: opportunism has always thrived in moments of turbulence and threat.

I have (many) opinions on what the AI community could have done differently in their public messaging—but I fear that ship has largely sailed.

One of Bernie Sanders’s viral anti-AI video missives (and corresponding WSJ op-ed) consists largely of a supercut of AI executives quoted in their own words. By now, many people have heard how Silicon Valley leaders talk about human obsolescence and displacement and rogue AI risk. They may be speaking honestly, but comms strategies aimed at investors and recruits do not always sound great in the public eye.1 (To AI’s opponents, Altman is seen as a two-faced liar—but to a lesser extent, so is Dario Amodei, for hand-wringing about workers while profiting from their replacements. And only more sophisticated AI followers know the difference between OpenAI’s and Anthropic’s policy stances anyway: many people I talk to toss them both under the banner of “Big Tech.”)

Those working on AI safety, meanwhile, focused nearly exclusively on existential risk for years at the cost of everyday concerns like mental health and jobs. I still remember telling safety folks in early 2025 that I was interested in labor impacts, only to be rebuffed with a dismissive “I don’t worry much about short-term harms.” As with the Pentagon situation, I’m not suggesting that their investment in technical alignment is wrong—rather, that the blind spot on sociopolitical alignment will come back to bite. Instability is a product not only of a model’s parameters but also of the social context the model exists in. (Now some of these organizations have changed their tune, soliciting strange bedfellows to fight for a pause.)

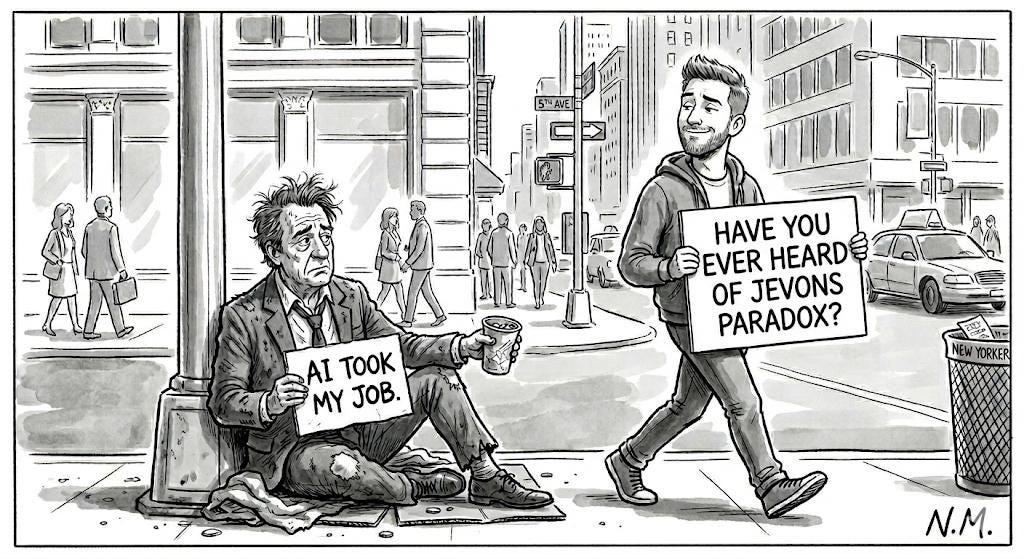

While Silicon Valley is finally waking up to its narrative failures, I wonder if they emotionally understand what’s going on. For example, my friends in AI complain that data center moratoria are dumb because they merely drive new construction to other countries (or outer space). They are probably right on the particulars, but that’s not the point. Most people don’t know anyone working at an AI company or on AI governance; they have no real agency to shape the trajectory of the tech. Talk of aggregate consumer surplus is scarce solace to an illustrator or cab driver losing their job. When people feel disempowered, they grasp at whatever leverage they can get.

Backlash is also the inevitable consequence of AGI-pilling the nation. Like okay, congrats, now everyone’s woken up to AI and its threat. Do we expect them to stay quiet and meekly accept what’s to come?2

I do not want to see more AI-related violence, but I do expect more storms ahead. In The Technology Trap, an excellent book on the political economy of automation, Carl Benedikt Frey makes a materialist argument based on centuries of historical examples:

> The idea underpinning this book is straightforward: attitudes toward technological progress are shaped by how people’s incomes are affected by it. Economists think about progress in terms of enabling and replacing technologies. The telescope, whose invention allowed astronomers to gaze at the moons of Jupiter, did not displace laborers in large numbers—instead, it enabled us to perform new and previously unimaginable tasks. This contrasts with the arrival of the power loom, which replaced hand-loom weavers performing existing tasks and therefore prompted opposition as weavers found their incomes threatened. Thus, it stands to reason that when technologies take the form of capital that replaces workers, they are more likely to be resisted.

Automation always sows the seeds of its own resistance. Ordinary people may have fewer lobbying dollars, but they have other ways to escalate. That’s why I don’t think accelerationist steamrolling will work. The only way this conflict resolves peacefully is some kind of grand bargain: a flagship policy plan that directly addresses the public’s top fears about AI and meaningfully redistributes the gains.3 That, or giving people a worse disaster (WWIII?) to worry about. In a political environment this combustible, the laissez-faire approach seems doomed to fail.

links & life updates

I am now a contributing writer with The Atlantic. Excited to do more magazine stories alongside the Substack (subscribers get gift links, of course). As a result, I’ll experiment more with newsy posts like this that I write faster, but at the cost of pristine prose—especially when I’m focused on landing a bigger reported piece. LMK what you think.

On Wednesday, I did a very fun sold-out live podcast with the SF Standard on AI populism (plus some fun stuff, like checking in on my 2026 ins/outs list and AI social etiquette). You can listen below!

Anton Leicht deserves credit for being one of the first to write seriously about these political dynamics in AI (when it was not very popular to do so). This, on jobs and populism, and this, on AI safety movements, are good starting points.

Search Engine’s episodes on Waymo’s development and regulatory battles from PJ Vogt (and featuring Timothy B. Lee) were the best podcasts I’ve listened to all year. The second episode is also a good lens on the complications of AI populism: sometimes, “the people’s” side doesn’t actually include all people.

I’m headed to China again next week: Shanghai, Beijing, Shenzhen, Hangzhou. I’m especially interested in the state of robotics/embodied AI, and how Chinese people and policymakers are navigating AI’s social and economic impacts. It’ll be a packed trip, but do reach out if there are events/intros/recommendations I should know about!

Happy cherry blossom season,

Jasmine

Rationalists and EAs place an unusually high premium on being super honest and transparent, even when it incurs social cost. These norms cover everything from minor group house dramas to how CEOs should talk to journalists. I respect the honesty, but not the total disregard for audience. There are multiple ways to say a true thing. When the stakes are this high, I think that we could all pay more attention to framing honest, factual statements in more palatable or strategic ways.

I’m not saying that these violent acts are the fault of AI safety content or, for that matter, of critical journalism. But it should be an expected consequence—especially of the more inflammatory rhetoric. (See above footnote!)

No, I don’t know what this looks like; it’s an active research question I’m pursuing.

One nightmare is a future where we get AI that’s good enough to wreak social and economic havoc, but not yet good enough to cure cancer / solve climate change / deliver 10% GDP growth. In that world… who pays?

AI developing this way is a problem of capitalism, which is a problem of our political institutions breaking down. That's what has us barreling into a future that no one asked for and we never had a public conversation about.

AI rises within a society driven solely by market logic, whose most powerful leaders at worst don't care about normal people, and at best are indifferent to their lives, the stability of their own society, or the future of the planet.

It's too narrow to talk of AI having a narrative problem -- the AI backlash exists in the historical arc of big tech: social platforms, and disgust with business models built on the extraction of our attention and data and the fomenting of antagonism and anger.

The more informed among us may root for Anthropic over OpenAI -- but even if people less informed about these distinctions (new companies vs old big tech incumbents) throw all the companies into the bucket of "big tech," these distinctions aren't all that directionally important. The market logic wins, and we know that corporate elites like the ones who own and run the technology, even if they have moral compunctions about societal impact, will be driven by the arms race of market logic to grow at all costs.

Only within a system where corporations become more powerful than governments can they roll up decades of our collective expertise, thought, and craft to build tools to displace us with nearly no consequence -- this is the same society that federally prosecuted Aaron Swartz for downloading articles from JSTOR without permission.

And at just the moment we need democratic governance to politically and socially harness AI, re-orient socioeconomic policy, and build a new New Deal, we are governed by the most morally vacant and corrupt president and administration, probably ever. But beyond Trump, people recognize that their lives are governed just as much by big tech and a handful of techno-oligarchs.

So yeah, I don't support violence, but the AI populism is justified.

Thanks for this insightful analysis.

It's kind of wild to me that AI executives didn't see this coming. They're excitedly proclaiming this technology will displace the livelihoods of *millions of people*. The idea you can do such a thing without consequence seems extremely naive. No amount of Flock cameras can protect you from that kind of backlash.

Sam's blog post on the topic was also very interesting to me. He opened it with a picture of his family, in what seemed like an appeal to his humanity. I'm sure I'm not the only one who saw that and thought, what about the families of all the workers who are being laid off and displaced?

Maybe that makes me an AI populist as well. Anyway, great article.