🌻 AI vs. the pentagon

the alignment problem is Pete Hegseth

Who would win in a fight: an alcoholic Fox host with a fetish for extrajudicial airstrikes, or a neurotic Italian-American physicist running an AI company worth $380 billion?

Over the last few days, I’ve not been able to stop thinking about the insane Anthropic vs. Pentagon saga. This is arguably the most consequential AI news of the year so far: more than Claude Code, more than data center populism. I am supposed to be working on two non-Substack AI reporting projects1, but decided last night fuck it, emergency blog, let’s share some half-baked takes on the news.

I’ll start with a TL;DR of everything that’s happened. The whole thing plays out like a TV thriller, and I don’t blame anyone for not keeping up. (Fellow situation monitorers, feel free to skip ahead to the analysis if you like.)

In July last year, Anthropic signed a $200 million contract with the Pentagon to provide access to Claude. Until recently, Anthropic was the only leading AI lab whose services could be used on classified networks. The company was eager to cooperate with the US military, even partnering with Palantir. But when Claude was used for the January capture of Nicolas Maduro, that allegedly miffed an employee inside Anthropic, though new reporting suggests that the dealbreaker was really the Pentagon’s plan to analyze commercial bulk data on Americans.2 A pissed-off Pete Hegseth wanted to make super sure that Anthropic was down for anything he wanted, citing “all lawful uses,” which, under US military law, means basically whatever. And that was where things got messy.

The thing is, Anthropic’s original DoW contract included two exceptions for military use: their AI could not be used for domestic mass surveillance or fully autonomous weapons. But Hegseth ignored this, demanding that the Pentagon retain full discretion over how they use Claude. When Anthropic said no, he threatened to designate Anthropic a “supply chain risk”: a highest-tier national security designation usually reserved for companies like Huawei run by foreign adversaries. (Even Tencent and DeepSeek are not tarred with this label.) Anthropic was given a strict Friday 5pm deadline to comply with the DoW’s request.

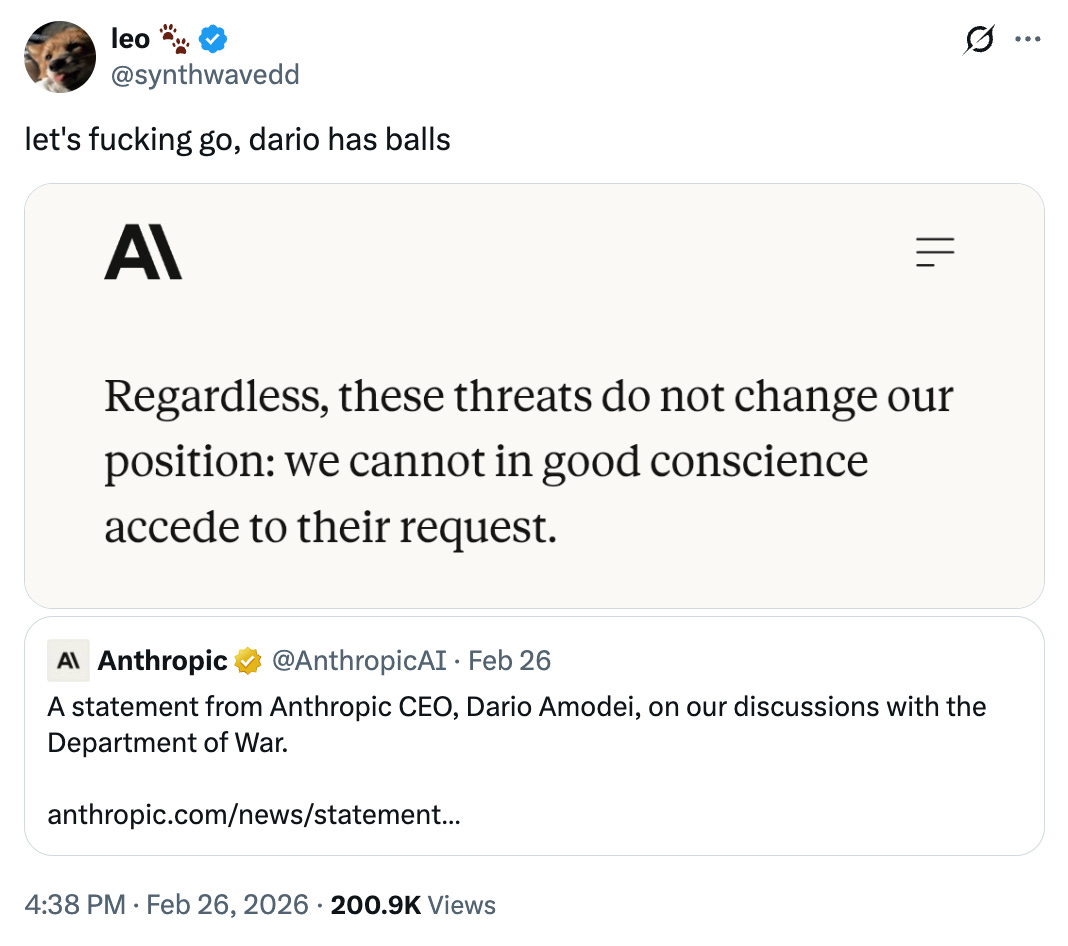

Days passed while Hegseth’s ultimatum hung in the air. Then, on Thursday, Dario Amodei published a statement: “These threats do not change our position: we cannot in good conscience accede to their request.” The AI community praised his courage. For a moment, there was celebration.

Well, Secretary Hegseth was not bluffing. He moved ahead with designating Anthropic a supply chain risk. In a long and dumb tweet, he calls the company’s behavior a “master class in arrogance and betrayal” and “a cowardly act of corporate virtue-signaling that places Silicon Valley ideology above American lives.” (He also uses the phrase “defective altruism,” which I must admit is pretty good.)

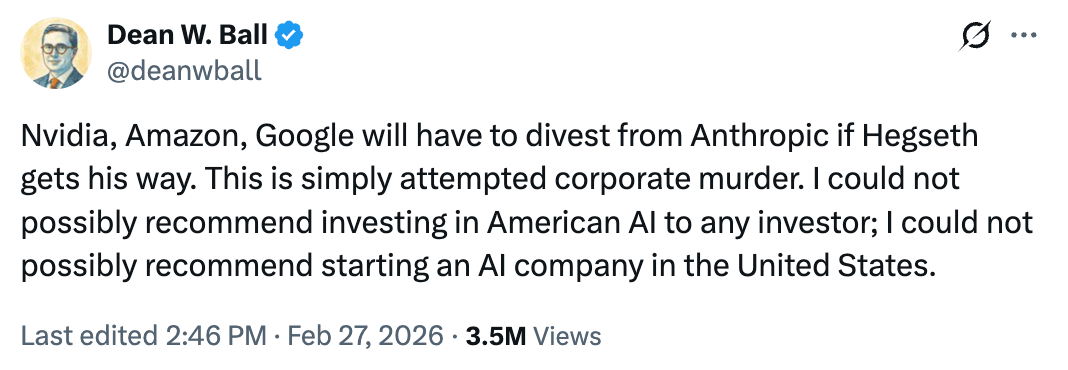

But the implications are severe. Hegseth implied that this would cut Anthropic from “any commercial activity” with US government suppliers: i.e. require NVIDIA, Google, and basically every other tech giant to stop transacting with Anthropic. (In reality, US supply chain risk law only applies to DoD contracts and procurement, not general private commerce.3) If this was merely about canceling the $200 million contract, that would be sort of understandable—I get why the DoW may not want to set a precedent for private companies setting the bounds of use. But the “supply chain risk” measure is just so, so extreme. As Kelsey Piper has emphasized, there is not a single constituency that should endorse this.

Then, late Friday night, Sam Altman swept in and made the confusing announcement that OpenAI will take the DoW contract while keeping the same two red lines as Anthropic, and offered to broker a truce with the other AI labs too. Crucially, OpenAI was willing to compromise by letting the Pentagon define what counts as “lawful” “mass surveillance” and “autonomous weapons.” That is: Altman seems to trust DoW discretion, and it’s not clear if OpenAI will hold separate red lines at all. His logic is that a democratically elected government, not a private company, should define ethical use. “We are generally quite comfortable with the laws of the US,” tweeted Altman in his Saturday AMA.

That, today, is where we stand.

(Last updated on 3/1/26.)

Now I am no national security expert, but neither is Pete Hegseth. What we both are is media professionals. And that’s why I’ll make my wager about what’s actually going on.

Hegseth is making a spectacle of punishing Anthropic—just like ICE made a spectacle of videotaping each immigrant deportation, and just like the CCP made a spectacle of disappearing Jack Ma for criticizing Chinese regulators.

This has nothing to do with national security or antiwokeness or anything like that. It is about striking fear into the hearts of any person or company—no matter how wealthy—who dares cross the admin. It is rule by fear and deterrence and chilling effect. I don’t think it matters if the “supply chain risk” is ruled unlawful and gets knocked down in the courts. It is enough to cause public pain and make an example of Anthropic. There is no way that Dario Amodei has spent more brain cycles on anything else for weeks. And there is no way that his tech CEO peers aren’t themselves agonizing about how to make sure they aren’t next.

Hegseth is not behaving like a normal political actor. He is indulging in ego, intimidation, and dickwaving theatrics. Hegseth does not want to look like he can be micromanaged by Anthropic’s esoteric morality police; this “saving face” matters more to him than actually securing the country. Hence the deal with Altman, who, unlike Amodei, is willing to kiss the ring. Altman shows up at Mar-a-Lago and calls Trump “incredible for the country.” In his announcement, he praised the DoW’s “respect for safety,” while Amodei called out their intimidation. Altman defers; Amodei doesn’t. These things matter. They show Altman can be worked with (or, more cynically, controlled).

To be clear, I don’t think this is how any tech leader wants to work with the government. It doesn’t matter if you’re tech right, effective altruist, a profit-maxxing mercenary, whatever: it is terrifying that the US government may try to destroy your whole business if you set any requirements for how your products are used, and that federal contracts no longer mean things and can be ripped apart whenever. (It is terrifying for the public that the Pentagon is throwing this fit over the right to mass surveillance and killer bots.)

The question is—given how petty and personalistic Hegseth and Trump and co. are—whether American executives still have any choice.

Politics has always been one of Silicon Valley’s blind spots. Over the last ten years, in the wake of the techlash and social media and antitrust trials, tech leaders have realized how beholden they are to government power.

One response has been to influence politics from the inside. Tech has ramped up its lobbying, propping up pro-crypto and pro-AI PACs. In the 2024 cycle, the industry-wide move to donate to Donald Trump’s reelection and get Silicon Valley voices in the White House was a bet that tech money could buy them immunity. And this was partially effective in domains like loosening chip controls and crypto deregulation.

But I think that tech’s free market faithfuls overestimated the extent to which their support could secure them against MAGA’s recklessness and dogma. There were early warning shots: little things like forcing Google to change their maps to “Gulf of America” instead of “Gulf of Mexico.” Then the infamous Liberation Day tariffs and crackdowns on H-1B visas, which most tech leaders balked at. (Personally, their surprise confused me: Trump was pretty clear while campaigning that he would do these two things.) Taking a 10 percent stake in Intel wasn’t popular either. And now this.

This is not a normal way for the US government to deal with US companies. I’ve dubbed the current paradigm “state capitalism with American characteristics.” Do what we say, or else we will kill you. Even Dean W. Ball, who authored Trump’s AI Action Plan, called Hegseth’s move “attempted corporate murder.”

If the Trump administration has a model here, it’s probably China. Xi’s CCP disappears billionaires like Jack Ma for acting too independent-minded and defiant of the regime. As part of a poverty reduction campaign in 2021, the government extracted tens of billions of “voluntary” philanthropic donations from China’s largest tech companies. It is not optional for DeepSeek to cooperate with Li Qiang’s demands. Chinese entrepreneurs never forget who really holds the reins.

But all these actions are now within the Overton window for what the US government may do to American businesses. The logic is the same: Authoritarians do not like competing centers of power.

With AI and authoritarianism, the stakes are grave.

Amodei knows this better than most. In his 20,000 word opus “The Adolescence of Technology,” he warned about the risks of AI being misused by terrorists, dictators, and evil corporations. AI is the most powerful surveillance technology ever created—it can take any person’s social media posts and phone location pings, and identify who is doing exactly what and where. It can dox people from short writing samples or blurry photographs. It democratizes knowledge, including dangerous knowledge, like the ability to build bioweapons. With AI, we can manufacture false images, voices, and videos that are indistinguishable from real ones. In addition to these risks, AI is delivering shocks to our labor markets, education system, and mental health; chipping away at a social fabric that’s already wearing thin.

Yet the most underbaked part of Amodei’s essay was his discussion of AI and democracy. He says that he will ensure AI is democratic by putting it in the hands of democratic countries (subtweet: the US) and keeping it away from autocratic ones (subtweet: China), while restricting cases where the AI “would make us more like our autocratic adversaries” (subtweet: the US under Trump). This is a narrow, wobbly tightrope to walk. On the Dwarkesh Podcast, he doubles down: Maybe AI “inherently has properties” that have a “dissolving effect on authoritarianism,” Amodei muses. We don’t know what, or how to get this democratic AI to citizens but not their masters, and similar hopes failed with regard to the internet and social media… but also maybe AI will be different, so it’s “worth a try.”

I use Claude a lot, but haven’t noticed any “inherent properties” for “dissolving” authoritarianism. Reserving AI use to nations in the crude bucket of “democratic” does not guarantee, as we see, that its applications will be just. (Also, Anthropic seems willing to build killer robots and do mass surveillance, just only once the models are a lot better than Opus 4.6, and only on countries besides the US.)4

Coming from an otherwise thoughtful and appropriately paranoid guy, Amodei’s logic here just seems naive. It’s hard to control a technology once you release it upon the world. If Anthropic doesn’t trust the military personnel it sells to, they’ll never feel assured that their products won’t be misused. Or take the internet: the same technology enables Chinese feminist activists to find each other, American officials to spread lies about peaceful protesters, and every use case in between.

On the default path, I think AI will do more to threaten civil liberties than to preserve them. This is not just the companies’ fault, nor is it inherent to the tech, but it’s rather a product of the stormy seas they swim in. Rapid AI progress, a decaying social fabric, and authoritarian backsliding are not a fun combination.

So the more pressing AI alignment problem is the sociopolitical one: the fact that something as seemingly simple as intent alignment gets complicated when you don’t know whose intents are prioritized. Quoting from my 2025 post:

There is a world where AI labs figure out interpretability and steerability (woo!), but still introduce tremendous risk because they are subject to other incentives—the market, a nation-state, a conniving CEO—that aren’t aligned with our own.

Consider the recent Grok incident, where X’s built-in AI suddenly developed an obsessive and unshakeable fascination with “white genocide” in South Africa… Grok was aligned with its owner, Elon Musk, but not with X users trying to ask it innocuous questions. This was laughable but instructive. Whether steered by corporate profit or government fiat, an intent-aligned AI system can still deliver a drug advert to an addict or a bomb to a civilian target.

Many AI researchers are overly focused on risks from model misalignment, and will be in for a rough surprise when havoc arises from other layers of the stack. Especially because AI companies are incentivized to spend tremendous resources making models more controllable, but disincentivized to pursue mechanisms that limit their own market power. (Nuclear weapons did not need instrumental convergence to destabilize the world. Perhaps OpenAI’s greatest alignment problem was between their nonprofit charter and their Microsoft deal.)

If AI’s particular impacts depend on whose intent it’s aligned to, folks who care about tech should care about political and social stability too. The best thing for both AI progress and AI safety was probably “not electing Donald Trump.” Yet in the 2024 election cycle, too much of Silicon Valley—enamored with the promise of a founder-mode president who could shake things up—dismissed the benefits of boring institutions and rule of law. And they ushered in a pack of erratic ideologues who care for nothing but their pride and the viral video clips they generate.

We don’t have to repeat those mistakes. Ten years after the techlash, after peak woke and the vibe shift right, Silicon Valley is undergoing a moral reckoning yet again. Prominent elder statesmen are loudly critiquing Trump. Anthropic’s principles are winning the vibes race among users and recruits. Employees are passing petitions around Signal chats. This generation of tech industry advocates seems savvier, more moderate, and more strategic about their demands. It’s good to see the courage, even while I’m not sure who will win. Once you’re reactive and on the back foot, it’s hard to gain the upper hand.

People working in AI still have disproportionate leverage over the future. That’s what attracts so many researchers to the field: more than money, more than clout, it’s the chance to make a dent.5 Our economy, security, and culture are riding on this one industry. Meanwhile, the vast majority of the public feels like there’s nothing they can do in the face of AI change.

So at the risk of sounding preachy: this privilege is a duty, and I hope you use it well. Think about the midterms; spend your money if not your time. Consider what you can build and say from the inside. You don’t have to be rash; careful strategy is good. But remember that progress is not a scientific guarantee. Better technology alone does not automatically lead to human flourishing—even great capabilities need a friendly environment in which to diffuse. A vaccine is only as good as the number of people who get it; task automation is more fun when you sit above the API. Politics is dumb and messy but it also rules our lives.

In the same interview, Amodei proffered one more theory for how AI might save democracy. Perhaps AI would make authoritarianism so “unworkable” that “people are more afraid” and have a “collective reckoning” about our rights. And when the authoritarian crisis peaks, we’ll “find another way.”

Well: I hope he’s right. Now is our chance.

If you work at an AI company (especially Anthropic, OpenAI, Google DeepMind, or xAI), my Signal is jws.27. I would love to hear what you think, and what I’m missing in this perspective. My policy is that we are never on the record unless we say so: I’m not looking for scoops, but am focused on staying informed so I can represent a nuanced, accurate picture of how people in AI think about their work. I’d also argue that speaking to trusted journalists (whether me or someone else) is an underrated way of having public impact. Savvy media professionals can say aloud the things that you can’t, and a lot of people with power still read the news.

Here are a few other perspectives I liked on Anthropic and the Pentagon, from across the political spectrum:

Henry Farrell on why all tech CEOs should fear this precedent

Jack Shanahan (who led Project Maven from the DoD) on what makes this different

Jordan Schneider, Eric Robinson, Tony Stark, and Justin Mc on military-civil fusion

Sarah Shoker (previous Geopolitics lead at OpenAI) shares context on AI and military use

There is also a DoW open letter going around for general tech industry professionals. You can sign it here. I don’t know if the DoW will care, but it’s useful to show that many in Silicon Valley share the same goals.

Stay strong,

Jasmine

1. One article on AI writing and one article on AI labor impacts! I’m particularly keen to talk to people at AI companies, political offices, and other organizations researching AI’s economic impacts for the latter. My Signal is jws.27 and my email is jaswsunny@gmail.com.

2. Multiple people at Anthropic have said that this never happened and was a fake leak to Semafor. They might be right, but I don’t think it’s possible for me to adjudicate what really happened.

3. I made a correction to clarify that this, while Hegseth’s stated intent, is not actually what a supply chain risk can lawfully mean. (The sentence initially said “This designation would require NVIDIA, Google, and every other tech giant doing business with the US government to stop transacting with Anthropic. In other words: Hegseth wants to shut down Anthropic’s business because Amodei won’t cut his red lines.”)

4. I like Vitalik Buterin’s concept of “defensive accelerationism”: we ought to build more of the technologies that are defense-dominant, that protect people’s liberties, while being extremely cautious of accelerating tech that has the power to oppress. Examples of defensive technologies include SynthID, cybersecurity and cryptography, mRNA vaccines, gate-busting institutions like Substacks. On the other side: bioweapons, cyberweapons, surveillance, deepfakes.

Still, most technologies are dual-use, and what’s most important to me is preserving a stable democratic system for innovation to happen within.

5. I don’t know how long this leverage will last, given that the labs are trying to automate AI research first.

Since I live in Minneapolis, I'd say we understand AI's risks to democracy better than Dario, given that AI has already been used not only to identify and map ICE targets but also to track protestors and journalists within the US. The pro-AI internet writers seemed to miss when this was actively happening earlier this year, and I'm not sure why because it made the national news. Since I'm old enough to remember decades of discussions about the PATRIOT Act, the use of Palantir's AI-enabled tools to track citizens has been as shocking as any other revelation from Operation Metro Surge.

The logic that anything Hegseth does is a media event is a bit daft to me, since he is not currently a media professional. He is actively hurting people in a multifront war.

Like many other Trump officials, he is extremely familiar with media cycles, but nothing he is doing is unique. Every senior official in the administration is deeply savvy about the current state of how tech and media interact to keep them in power. I mean, they built their own arsenal of influencers, media companies and social networks to promote their own authoritarian actions and narratives. They are not doing media; they are doing textbook 20th century totalitarianism applied to 21st century media systems.

In no way are you like Pete Hegseth, Jasmine. You downplay what is sinister and violent in hopes it will turn out ok, which is very sweet but also not particularly grounded in the reality of the current moment.

Regardless, I'd encourage workers in AI not to just think about elections, but to engage in loud and active conversation, and maybe even indulge in some activism, regarding how the United States is currently using AI tech in all the worst ways. They are taking the paycheck, so they have a responsibility to chew on this morality and use it to guide their actions as adults all the time, not just every couple of years in November.

Jasmine I have been waiting so eagerly for this post