🌻 why LLMs are bad writers but good editors

my new Atlantic essay + Claude editor setup

There’s a weird asymmetry between how tech people talk about AI’s incredible technical prowess and its attenuated capacity for art. Sam Altman has predicted that large language models will soon be capable of “fixing the climate, establishing a space colony, and the discovery of all of physics,” yet in an October interview with Tyler Cowen, he guessed that even GPT-7 might be able to extrude only something equivalent to “a real poet’s okay poem.” Cowen himself is sunnier on LLM poetry, but not on visuals. In his “New Aesthetics” grant, co-funded with Patrick Collison (also an AI writing skeptic), the two note that “we haven’t seen much great work that only uses AI.” Neither Altman nor Cowen nor Collison is known for either understatement or techno-pessimism. So, what gives?

I tumbled down an investigative rabbit-hole to answer this question: Why don’t large language models model language very well? Is it something about the way models are trained? The companies’ business priorities? Consumers’ bad taste? Or is literature really that special? I talked to a slew of researchers, engineers, authors, and data labelers, and tinkered relentlessly with the models myself. In my new essay for The Atlantic, I argue that the answer is something like D: All of the above. I think you should read the whole thing, but to boil it down, here are three brief reasons:

1. “Good writing” is hard to train because it’s hard to evaluate.
2. “Good writing” is not a business priority, so other post-training goals get in the way.
3. “Good writing” requires a grounding in real-life experience.

Reason #1, the verifiability problem, is the most commonly cited. Art is subjective; beauty is in the eye of the beholder, as they say. But over the course of reporting, I became convinced that this barrier may be the easiest of the three to overcome. Yes, current writing evals are mostly dumb: one Scale AI “Writing Evaluator” I talked to was asked to count exclamation marks for tone and grade fanfiction on its “factuality.” But no one’s forcing the labs to have philistines write the rubrics. In theory, a much more sophisticated expert could do the job.

That’s why I was fascinated by this 2023 paper from Tuhin Chakrabarty, a CS professor at Stony Brook, which attempts to establish concrete measures for literary creativity. Chakrabarty—who is doing some of the most interesting research in this field—enlisted a group of writing experts (literary agents, professors, and MFA candidates) to develop a rubric for New Yorker-style short stories. They came up with a list of 14 criteria, such as:

- Does the writer make the fictional world believable at the sensory level?
- Does the story contain turns that are both surprising and appropriate?
- Does each character in the story feel developed at the appropriate complexity level, ensuring that no character feels like they are present simply to satisfy a plot requirement?
- Does the story operate at multiple “levels” of meaning (surface and subtext)?

Chakrabarty then used this rubric to answer two questions: First, can creative writing be evaluated objectively and reliably? Second, how good are LLM-written short stories?

The answer to the latter was “not very,” to nobody’s surprise. LLM-written short stories scored consistently worse than those by human writers. But I was much more interested in the first question: whether creativity could be measured. Chakrabarty and his co-authors proposed that the answer was yes. In a blind experiment, both human expert and LLM judges achieved high levels of consensus in grading stories with the rubric. Judges agreed on even fuzzy qualities like “believability,” “originality,” and “characterization.” Perhaps, they imply, literary quality might be measured, and thus trained for, after all!
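For the curious, here is a rough sketch of what "consensus" between two judges can mean in numbers. It computes Cohen's kappa, one common agreement statistic that corrects raw agreement for chance. This is an illustration only: the judge answers are invented, the function name is my own, and I am not claiming this is the statistic or procedure the paper used.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Chance-corrected agreement between two raters over the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must score the same non-empty set of items.")

    n = len(rater_a)

    # Observed agreement: share of items where the two raters gave the same label.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n

    # Expected agreement: how often they would match by chance,
    # given how frequently each rater uses each label.
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)

    if expected == 1.0:
        # Both raters used a single identical label throughout.
        return 1.0
    return (observed - expected) / (1 - expected)


# Hypothetical yes/no answers from two judges to one rubric question
# (e.g. "Is the fictional world believable?") across eight stories.
judge_1 = ["yes", "no", "yes", "yes", "no", "no", "yes", "no"]
judge_2 = ["yes", "no", "yes", "no", "no", "no", "yes", "yes"]

print(cohens_kappa(judge_1, judge_2))
```

A kappa of 1 means perfect agreement and 0 means no better than chance. With more than two judges, a multi-rater statistic such as Fleiss' kappa would be the usual swap-in.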

Now, I am pretty adamant that good nonfiction ought to be grounded in life. I do not aim to be the sort of writer who churns out essays from a closet, doing discourse about discourse and never leaving the house. For me, “reporting” and “ethnography” and “being in the world”—talking to people in person, seeing stuff with my eyes, condensing those visceral experiences into black-and-white text—is inextricable from my ability to say interesting things.

Editing, however, is a different job. Great human editors tend to be curious polymaths with good taste, low egos, and the therapeutic talents to elicit a neurotic writer’s best work. Their knowledge can be broader than it is deep, and they don’t need to cultivate a distinct voice of their own (though many do happen to be excellent writers themselves). That is closer to a role that LLMs can play.

One problem: today’s AI chatbots don’t come with good taste. The factory settings are designed for a sycophantic corporate assistant, not a creative genius. But the Chakrabarty paper shows that smart people can articulate their taste, then teach it to an AI. An LLM may not be able to write a good story yet, but it can already evaluate one.

That’s the philosophy I’ve applied to building my AI editor. I created a custom rubric and editing scaffold to guide Claude’s writing feedback. I have a good amount of professional editing experience, so this meant translating my tacit process into something systematic and automatable—I don’t think I could do it if I didn’t trust my voice.

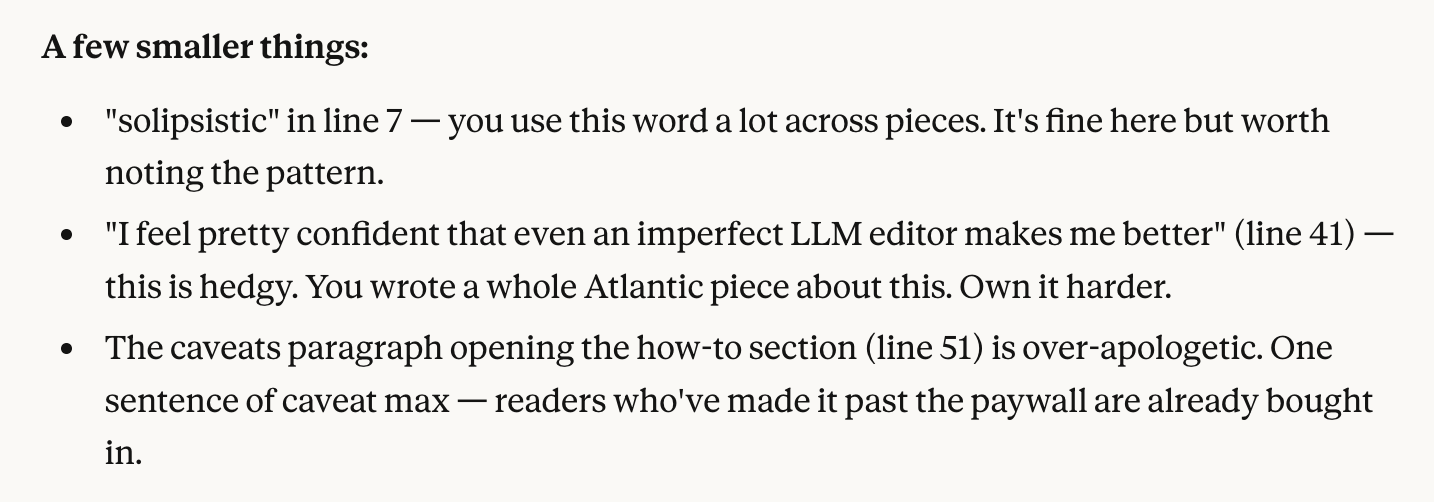

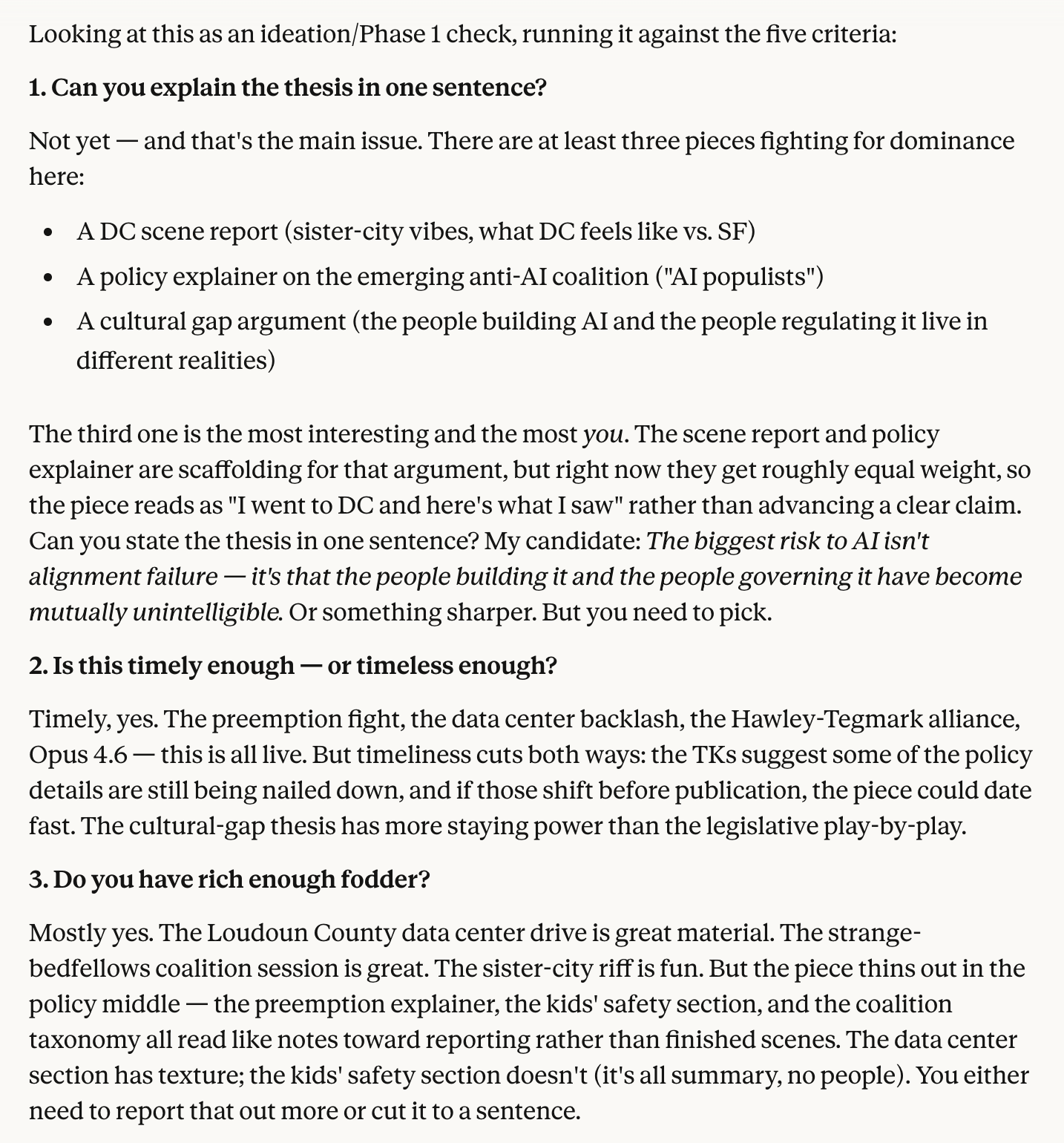

And it worked. The resulting tool is as good as many human editors I’ve had, and vastly beats the counterfactual of running loops in my own head when drafting Substacks at 3am. The whole thing took a bit of setup but no code. And now I have on-demand access to feedback like this:

I thought these comments were pretty good!

Unlike the variously disappointing editing apps I’ve tried, which still try to shove prose into their own rigid standards, the big unlock here was teaching the LLM my personal taste before asking it to judge. This made Claude’s suggestions much more useful—you’ll notice that it refers to my past writing and stated goals. Whereas Claude used to tell me to scrub my essays of language that read “too casual,” the new customized editor acknowledges slang as part of my voice. And rather than inventing scenes for me, it points out places where my reporting is thin.

I’ve been talking nonstop about this project, and several writer friends have asked for details about my approach. So below the paywall, I share my specific process, prompts, and criteria, step by step, so you can create your own AI editor, plus links to my favorite recent reads.

(No human editors were harmed in the making of this tool. I freelance largely for the opportunity to learn from different editors, with their own tastes and collaborative approaches. But I wasn’t going to hire one for my Substack anyway.)

Updates: I went on the excellent Hard Fork podcast to talk AI and writing. I previously debated Robin Sloan on this topic. On April 9 in SF, I’m doing a live podcast on the politics of AI: populism, the Pentagon, DC vibes, etc. Come!